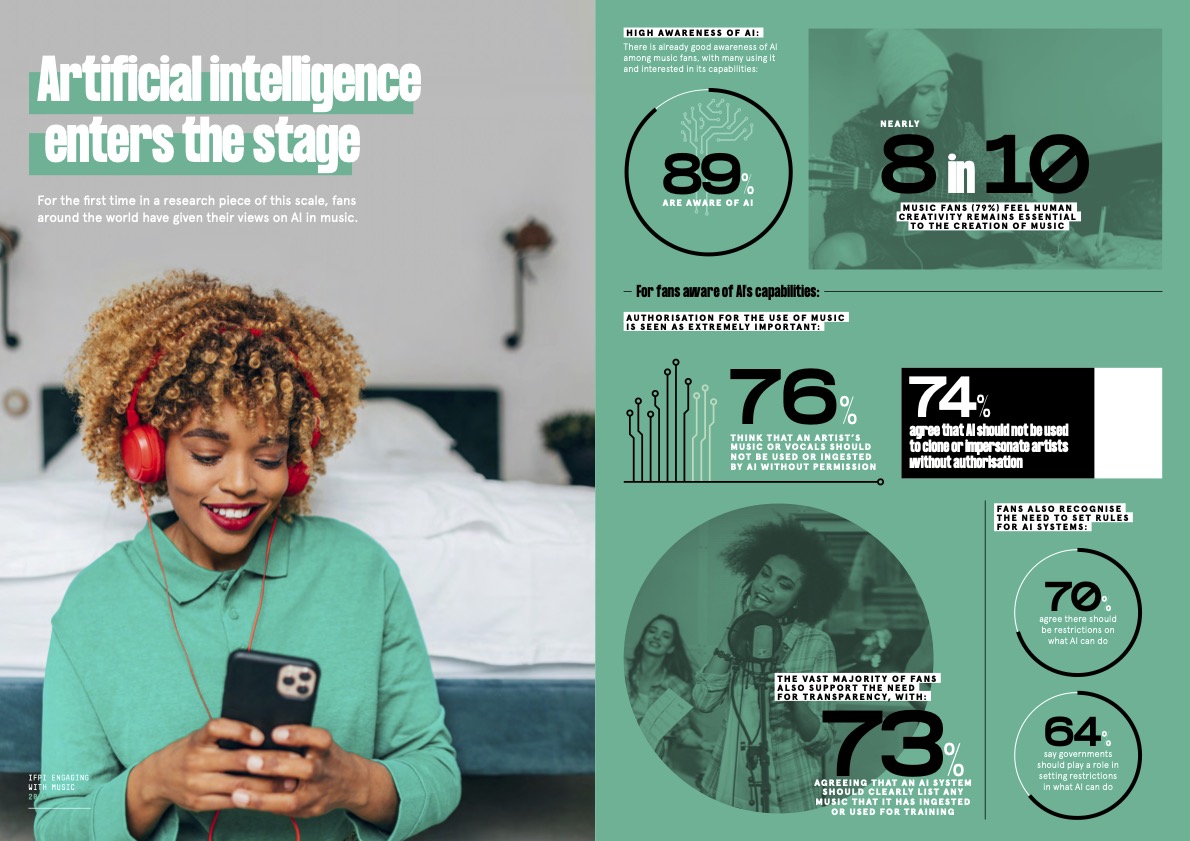

IFPI has shared findings on attitudes to artificial intelligence from the biggest study of music fans in the world.

The research comes from the forthcoming Engaging With Music 2023, IFPI’s global report examining how fans around the world engage with, and feel about, music. With responses from more than 43,000 people across 26 countries, the report is billed as the largest music study of its kind and the most detailed insight into fan thinking.

This year, for the first time, the report includes a section dedicated to artificial intelligence as the technology’s rapid advancement continues to present both opportunities and challenges for the music business and for artists. According to the survey, fans deeply value authenticity – 79% feel that human creativity remains essential to the creation of music.

For fans aware of generative AI’s ability to take and copy existing artists’ repertoire, authorisation for the use of any artist’s music is seen as non-negotiable: 76% feel that an artist’s music or vocals should not be used or ingested by AI without permission.

Furthermore, 74% agree that AI should not be used to clone or impersonate artists without authorisation. The finding follows high-profile cases involving fake versions of Drake and The Weeknd. However, YouTube Shorts has partnered with the industry on licensed AI cloning experiments.

The vast majority of fans also support the need for transparency, as 73% agree that an AI system should clearly list any music that it has used.

Frances Moore, IFPI’s chief executive, said: “While music fans around the world see both opportunities and threats for music from artificial intelligence, their message is clear: authenticity matters. In particular, fans believe that AI systems should only use music if pre-approved permission is obtained and that they should be transparent about the material ingested by their systems. These are timely reminders for policymakers as they consider how to implement standards for responsible and safe AI.”

Fans also recognise the need to set rules for AI systems, with 70% agreeing there should be restrictions on what AI can do. Among those surveyed, 64% say governments should play a role in setting those restrictions.